KODA

The brief

A high-output performance team can burn a week just orchestrating one video ad: brief in Notion, storyboard in Figma, edit in Premiere, voiceover from a freelancer, music from a library, delivery to the media buyer, repeat. Each variant costs so much to produce that teams under-test, which is exactly the wrong move on Meta and TikTok, where creative refresh is the single biggest performance lever.

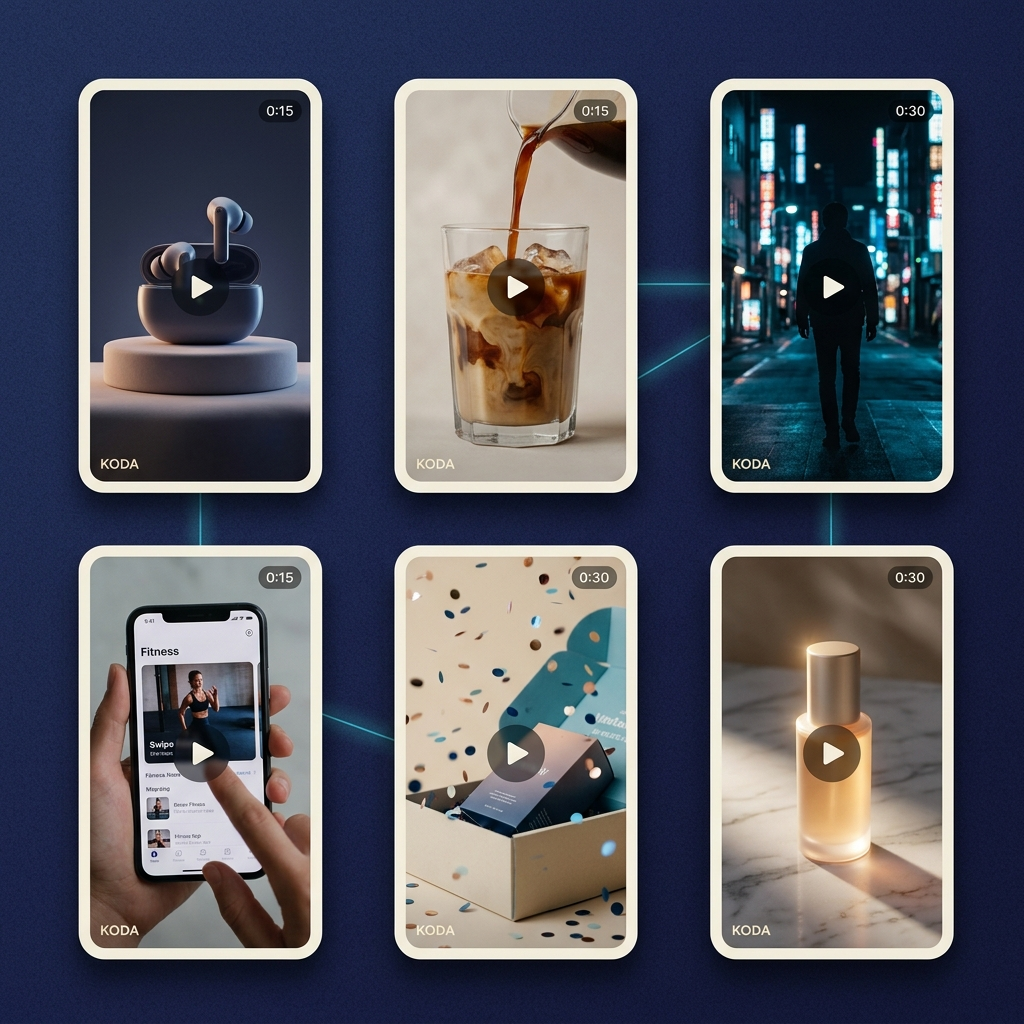

KODA's premise: turn the entire production stack into a single visual workflow, where every step is an AI agent and every artifact is inspectable. Operators drag nodes onto an infinite canvas, connect them, and ship a finished, render-ready video ad in hours.

An infinite canvas of specialized agents

Each node on the canvas is a narrowly scoped agent with a typed input contract, a typed output contract, and a deterministic evaluation gate. Connecting two nodes is more than a UI affordance: it is a structured handoff that the runtime can validate, replay, branch, and roll back.
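The following is a minimal sketch of what a typed handoff could look like. It models a node as an input type, an output type, a run function, and a pure evaluation gate, then rejects any edge whose types do not line up. Every name here (`Node`, `CampaignBrief`, `CreativeRequest`, `validate_edge`, `execute`) is hypothetical, because the write-up does not describe KODA's actual schemas or runtime API. The `CreativeRequest` fields mirror the Prompt node's output (hook, audience, format, duration, tone).

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical payload types; KODA's real schemas are not described in this write-up.
@dataclass(frozen=True)
class CampaignBrief:
    text: str


@dataclass(frozen=True)
class CreativeRequest:
    hook: str
    audience: str
    format: str
    duration_s: float
    tone: str


def _always_pass(_output: object) -> bool:
    return True


@dataclass
class Node:
    name: str
    input_type: type   # typed input contract
    output_type: type  # typed output contract
    run: Callable[[object], object]
    # Deterministic evaluation gate: a pure predicate over the node's output.
    gate: Callable[[object], bool] = _always_pass


def validate_edge(src: Node, dst: Node) -> None:
    """Reject a connection whose upstream output type differs from the downstream input type."""
    if src.output_type is not dst.input_type:
        raise TypeError(
            f"{src.name} emits {src.output_type.__name__}, "
            f"but {dst.name} expects {dst.input_type.__name__}"
        )


def execute(node: Node, payload: object) -> object:
    """Run one node and enforce its input contract, output contract, and gate."""
    if not isinstance(payload, node.input_type):
        raise TypeError(f"{node.name}: unexpected input {type(payload).__name__}")
    output = node.run(payload)
    if not isinstance(output, node.output_type):
        raise TypeError(f"{node.name}: unexpected output {type(output).__name__}")
    if not node.gate(output):
        raise ValueError(f"{node.name}: output failed its evaluation gate")
    return output


# Usage: a stub Prompt node whose gate enforces an illustrative 6-60 s duration range.
prompt = Node(
    name="prompt",
    input_type=CampaignBrief,
    output_type=CreativeRequest,
    run=lambda brief: CreativeRequest(
        hook=brief.text, audience="new parents", format="9:16",
        duration_s=15.0, tone="warm",
    ),
    gate=lambda req: 6.0 <= req.duration_s <= 60.0,
)
request = execute(prompt, CampaignBrief("Fresh coffee, delivered before you wake."))
```

In this sketch, types are checked twice. The check at connection time catches a miswired canvas before anything runs. The check at run time catches a misbehaving agent.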

- Prompt node — accepts a campaign brief and emits a structured creative request (hook, audience, format, duration, tone).

- Storyboard node — turns the creative request into a frame-by-frame plan with shot type, camera intent, and on-screen copy per beat.

- Image node — generates each storyboard frame at production resolution, honoring brand tokens and product reference images.

- Voice node — synthesizes voiceover with selectable voice profiles, paced to the storyboard timing.

- Music node — selects or generates a backing track keyed to mood, BPM, and ad duration, with sidechain ducking under the voice.

- Compose node — assembles frames, voice, music, captions, and motion into the final timeline, then hands off to the render layer.
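The Music node's sidechain ducking can be illustrated with a simplified gain envelope. This sketch keys the duck on a per-frame voice level crossing a threshold, which stands in for a real compressor's level detector. It smooths the gain with a one-pole filter so the music dips quickly when the voice starts and recovers more slowly after it stops. The function name, frame-based timing, and defaults (-12 dB duck, 2-frame attack, 5-frame release, 0.05 threshold) are assumptions, not KODA's parameters.

```python
def duck_gains(
    voice_levels: list[float],
    threshold: float = 0.05,
    duck_db: float = -12.0,
    attack_frames: int = 2,
    release_frames: int = 5,
) -> list[float]:
    """Per-frame linear gain for the music bed, lowered while the voice is active."""
    duck_gain = 10 ** (duck_db / 20)  # dB to linear amplitude; -12 dB is about 0.25
    gains: list[float] = []
    gain = 1.0
    for level in voice_levels:
        speaking = level > threshold
        target = duck_gain if speaking else 1.0
        # One-pole smoothing: each frame closes 1/n of the gap to the target, with
        # n = attack_frames while the voice is active and release_frames otherwise.
        n = max(attack_frames if speaking else release_frames, 1)
        gain += (target - gain) / n
        gains.append(gain)
    return gains


# Usage: silence, three frames of speech, then silence; a -20 dB duck is 0.1 linear.
gains = duck_gains(
    [0.0, 0.0, 0.4, 0.5, 0.3, 0.0, 0.0, 0.0],
    duck_db=-20.0, attack_frames=1, release_frames=2,
)
```

Multiplying each music frame by its gain before summing it with the voice gives the ducked mix.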

Branching, forking, and parallel variants

Because every node is structured, operators can fork the graph at any point — change the storyboard, leave the voice, regenerate the imagery — and KODA runs the downstream nodes in parallel. A single brief routinely produces a dozen finished variants on the same canvas, each fully traceable to the upstream choices that produced it.
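One way to realize fork-and-rerun is to treat the canvas as a directed acyclic graph. Changing a node marks everything downstream of it as dirty. Nodes the operator pins keep their cached output. The dirty subgraph is then split into waves, where nodes in the same wave share no dependencies and can run concurrently. The edge list below is a hypothetical wiring of the six node types, and `dirty_nodes` and `parallel_waves` are illustrative names. The scheduling relies on Python's standard `graphlib.TopologicalSorter`.

```python
from collections import deque
from graphlib import TopologicalSorter

# Hypothetical wiring of the six node types (upstream -> downstream).
EDGES = {
    "prompt": ["storyboard"],
    "storyboard": ["image", "voice", "music"],
    "image": ["compose"],
    "voice": ["compose"],
    "music": ["compose"],
    "compose": [],
}


def dirty_nodes(edges, changed, pinned=frozenset()):
    """Nodes to re-run after a fork: `changed` plus everything downstream of it.

    A pinned node keeps its cached output and does not propagate staleness
    along its own outgoing edges; other paths can still reach its descendants.
    """
    dirty, queue = set(), deque(changed)
    while queue:
        node = queue.popleft()
        if node in dirty or (node in pinned and node not in changed):
            continue
        dirty.add(node)
        queue.extend(edges[node])
    return dirty


def parallel_waves(edges, dirty):
    """Split dirty nodes into waves; nodes in the same wave do not depend on each other."""
    preds = {node: set() for node in dirty}
    for src, dsts in edges.items():
        for dst in dsts:
            if src in dirty and dst in dirty:
                preds[dst].add(src)
    sorter = TopologicalSorter(preds)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


# Usage: change the storyboard, keep the existing voiceover, regenerate the rest.
dirty = dirty_nodes(EDGES, {"storyboard"}, pinned={"voice"})
waves = parallel_waves(EDGES, dirty)
```

Here the prompt and voice outputs are reused as-is. Image and music regenerate side by side, and compose runs last. Recording which nodes were changed or pinned for each fork is one simple way to keep a variant traceable to its upstream choices.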

The render layer

The compose node hands off to a render pipeline that produces broadcast-ready MP4s at every aspect ratio (9:16, 1:1, 4:5, 16:9) needed for Meta, TikTok, and YouTube placements. The pipeline runs on a GPU-backed queue with prioritization, retry, and resumable jobs, so a campaign push of 40 variants finishes in minutes rather than hours.
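The render layer's fan-out, prioritization, retry, and resume behavior can be sketched with a min-heap. This sketch makes several assumptions:

- Each variant becomes one job per aspect ratio.
- The short side is 1080 px.
- A lower `priority` number renders first.
- Each job is split into segments, so a retry resumes at the last completed segment instead of starting over.

`RenderJob`, `run_queue`, `frame_size`, and the simulated `flaky_segment` failure are all hypothetical stand-ins for whatever GPU queue KODA actually uses.

```python
import heapq
from dataclasses import dataclass, field
from typing import Callable

# Placement ratios from the text; the 1080 px short side is an assumption.
ASPECTS = {"9:16": (9, 16), "1:1": (1, 1), "4:5": (4, 5), "16:9": (16, 9)}


def frame_size(aspect: str, short_side: int = 1080) -> tuple[int, int]:
    """Return (width, height) in pixels for an aspect ratio with the given short side."""
    w, h = ASPECTS[aspect]
    if w <= h:
        return short_side, short_side * h // w
    return short_side * w // h, short_side


@dataclass(order=True)
class RenderJob:
    priority: int  # lower number renders first
    seq: int       # submission order, used as a tie-breaker
    variant: str = field(compare=False)
    aspect: str = field(compare=False)
    segments: int = field(default=4, compare=False)
    done_segments: int = field(default=0, compare=False)  # resume checkpoint
    attempts: int = field(default=0, compare=False)


def run_queue(jobs, render_segment: Callable[[RenderJob, int], None], max_attempts: int = 3):
    """Drain the queue in priority order, retrying failed jobs from their checkpoint."""
    heap = list(jobs)
    heapq.heapify(heap)
    finished, failed = [], []
    while heap:
        job = heapq.heappop(heap)
        job.attempts += 1
        try:
            while job.done_segments < job.segments:
                render_segment(job, job.done_segments)
                job.done_segments += 1  # only advanced after a segment succeeds
            finished.append(job)
        except RuntimeError:
            if job.attempts < max_attempts:
                heapq.heappush(heap, job)  # requeue; resumes at done_segments
            else:
                failed.append(job)
    return finished, failed


# Usage: one variant fanned out to all four ratios, with 9:16 prioritized and
# a single simulated transient failure on its third segment.
pending_failures = {("v1", "9:16", 2)}
calls = []


def flaky_segment(job: RenderJob, segment: int) -> None:
    key = (job.variant, job.aspect, segment)
    calls.append(key)
    if key in pending_failures:
        pending_failures.discard(key)
        raise RuntimeError("worker lost")


jobs = [
    RenderJob(priority=0 if aspect == "9:16" else 1, seq=i, variant="v1", aspect=aspect)
    for i, aspect in enumerate(ASPECTS)
]
finished, failed = run_queue(jobs, flaky_segment)
```

At one job per variant per ratio, a 40-variant push fans out to 160 render jobs.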

Human control at every junction

Every node exposes a preview pane and a parameter inspector — copywriters can rewrite a hook before it ever reaches the image node; an art director can approve or replace a frame before voice is generated. Nothing is a black box, and nothing is irreversible. The agent does the work; the operator keeps the taste.
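A human approval gate can be modeled as a versioned artifact that blocks downstream nodes until an operator signs off. In this sketch, every regeneration or manual replacement is appended rather than overwritten, so an earlier version can always be re-approved. `Artifact`, `propose`, `approve`, and `can_run` are hypothetical names, and the real editor may gate differently.

```python
from dataclasses import dataclass, field
from typing import Any, List, Optional


@dataclass
class Artifact:
    """One node's output, with its full version history and the approved version."""
    node: str
    versions: List[Any] = field(default_factory=list)
    approved: Optional[int] = None  # index into versions, or None while pending

    def propose(self, value: Any) -> int:
        """Append a generated or hand-edited version; it needs review before use."""
        self.versions.append(value)
        self.approved = None
        return len(self.versions) - 1

    def approve(self, version: int) -> None:
        """Approve any version, including an older one (a rollback)."""
        if not 0 <= version < len(self.versions):
            raise IndexError(f"{self.node}: no version {version}")
        self.approved = version

    def current(self) -> Any:
        """Return the approved version, or fail if review is still pending."""
        if self.approved is None:
            raise RuntimeError(f"{self.node}: awaiting operator approval")
        return self.versions[self.approved]


def can_run(inputs: List[Artifact]) -> bool:
    """A downstream node may start only when every input has an approved version."""
    return all(a.approved is not None for a in inputs)


# Usage: an art director replaces a generated frame before voice generation starts.
frame = Artifact("image/frame-03")
v0 = frame.propose("frame-03-generated.png")
blocked = not can_run([frame])  # pending review, so the voice node waits
v1 = frame.propose("frame-03-art-director.png")
frame.approve(v1)
```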

What we shipped

- The visual editor — infinite canvas, node library, parameter inspector, version history, and shareable graphs.

- The agent runtime — typed contracts, evaluation harness, replay, and full audit logs for every generation.

- A GPU-backed render pipeline producing MP4 deliverables at every standard ad placement ratio.

- A workspace layer — projects, brand kits, asset libraries, and per-client permission scopes.

The result

KODA collapses a three-week traditional production cycle into a four-hour workflow with the same finishing quality. Teams now ship dozens of variants per campaign instead of one, A/B-test hooks and music in real time, and have full provenance from finished spot back to the originating brief — a 10× lift in ad iteration speed with no loss of creative control.